Understanding Modern Cloud Architecture on AWS: Container Orchestration with ECS

Table of Contents

- The Video: Resource Management with Docker and ECS

- Introduction

- The Infrastructure So Far...

- AWS Elastic Container Service, aka ECS

- Conclusion

- Other Posts in This Series

The Video: Resource Management with Docker and ECS

Introduction

In the interlude we covered the entire technology path from plain machines to clusters, and ended on how container orchestration works. In essence, container orchestration allows you to batch together a bunch of machines, treat them as if they're one, and then deploy containers to them. It makes it feel as if you're managing multiple containers on ONE machine, which is far simpler. Handling scaling becomes a simple process of adding more machines to your cluster and launching more containers onto them. And when I say "simple" I mean simple relative to the alternative ways of doing things.
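To make the "treat many machines as one" idea concrete, here's a minimal sketch in Python. Everything in it (the `Machine` class, the `place` function, the machine names, the memory sizes) is made up for illustration, and the "most free memory wins" rule is just one simple placement strategy, not a claim about how any particular orchestrator decides.

```python
from dataclasses import dataclass, field


@dataclass
class Machine:
    """One server in the cluster (hypothetical model, memory only)."""
    name: str
    memory_mb: int
    containers: list = field(default_factory=list)  # (container name, memory) pairs

    def free_mb(self) -> int:
        return self.memory_mb - sum(mem for _, mem in self.containers)


def place(cluster: list, container: str, memory_mb: int) -> str:
    """Put the container on the machine with the most free memory."""
    best = max(cluster, key=lambda m: m.free_mb())
    if best.free_mb() < memory_mb:
        raise RuntimeError("cluster is full: add another machine")
    best.containers.append((container, memory_mb))
    return best.name


# We only ever talk to "the cluster", never to an individual machine.
cluster = [Machine("machine-a", 1024), Machine("machine-b", 1024)]
placements = [place(cluster, f"web-{i}", 512) for i in range(4)]
```

Once both machines are full, scaling is just `cluster.append(Machine("machine-c", 1024))` followed by more calls to `place`. The caller never has to care which machine a container lands on.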

In this space there are a variety of tools that one can use to bring this into reality. However, the one we're going to focus on in this post is AWS Elastic Container Service. The primary reason is that this entire series is about architecture ON AWS...and so working with a service that's a first class citizen on AWS makes sense. The second reason is that, unlike something like AWS EKS, ECS doesn't cost anything extra - you only pay for the underlying resources. The third reason is that Kubernetes is practically a knowledge black hole, and though it's the dominant choice for large teams, something like ECS will cover all levels of scale as well (otherwise folks like Riot Games wouldn't have been able to handle 100 million monthly active users on it).

ECS handles so much of the plumbing required to get a container orchestration cluster up and running that it's something both a one-person-army and enterprise-level teams can make use of. It also helps that I have a variety of guides on it that I can confidently point you to, whereas with most of the other solutions, there is no one definitive source of education. Granted that's my opinion, but let's face it, you're probably not looking for the documentation.

The Infrastructure So Far...

AWS Elastic Container Service, aka ECS

Your company has come back to you and said, "Oh hey, we have a new admin application we'd like to launch." Now we've got to fit that into our cloud architecture we've been working on.

As we talked about previously, we could just spin up an entirely new version of our cloud architecture. That would mean creating an entirely new set of load balanced servers with this new admin application. We would then have two load balancers we'd send traffic to - one for the user-facing application and one for the admin-facing application.

That's fine and it would work...but it wouldn't be all that fun, would it? We're defining a pattern where we'll need a separate set of servers and load balancers for any new application or service we want to add. Don't get me wrong, this is way better than putting each application on its own overpowered machine. But it does take us down the path of varying server configurations.

"But Cole, I'd just use the same server and load balancer configuration."

Right, but if that's the case, why run a separate set of servers for this new application? If they're the same, we should just put them on the same machine right?

"Yes, but managing multiple user-facing applications on a machine is difficult. Dependencies clash, networking rules overlap, and patching them is like Russian roulette..."

Well, that's what our new friend Docker and its almighty conductor, Container Orchestration, will help us with.

Thanks to the previous sections on Containers and Container Orchestration, we know that with Docker we can put multiple things onto one host machine without having to worry about collision between the applications and their needs. We know that it'll be easier to manage and configure them since containers are isolated. And, we also know that if we ever want to move the applications in the future, all we have to make sure of is that our new host has Docker on it.

This sounds fabulous but looking at it more closely...how exactly do we get traffic into our containers? How do we manage them across multiple EC2 instances? How do we connect our load balancer to them? And with the load balancer, how does it know to split up traffic between containers?

Yes, there are a lot of questions here, and no, Docker does NOT solve them all for us, BUT AWS Elastic Container Service DOES.

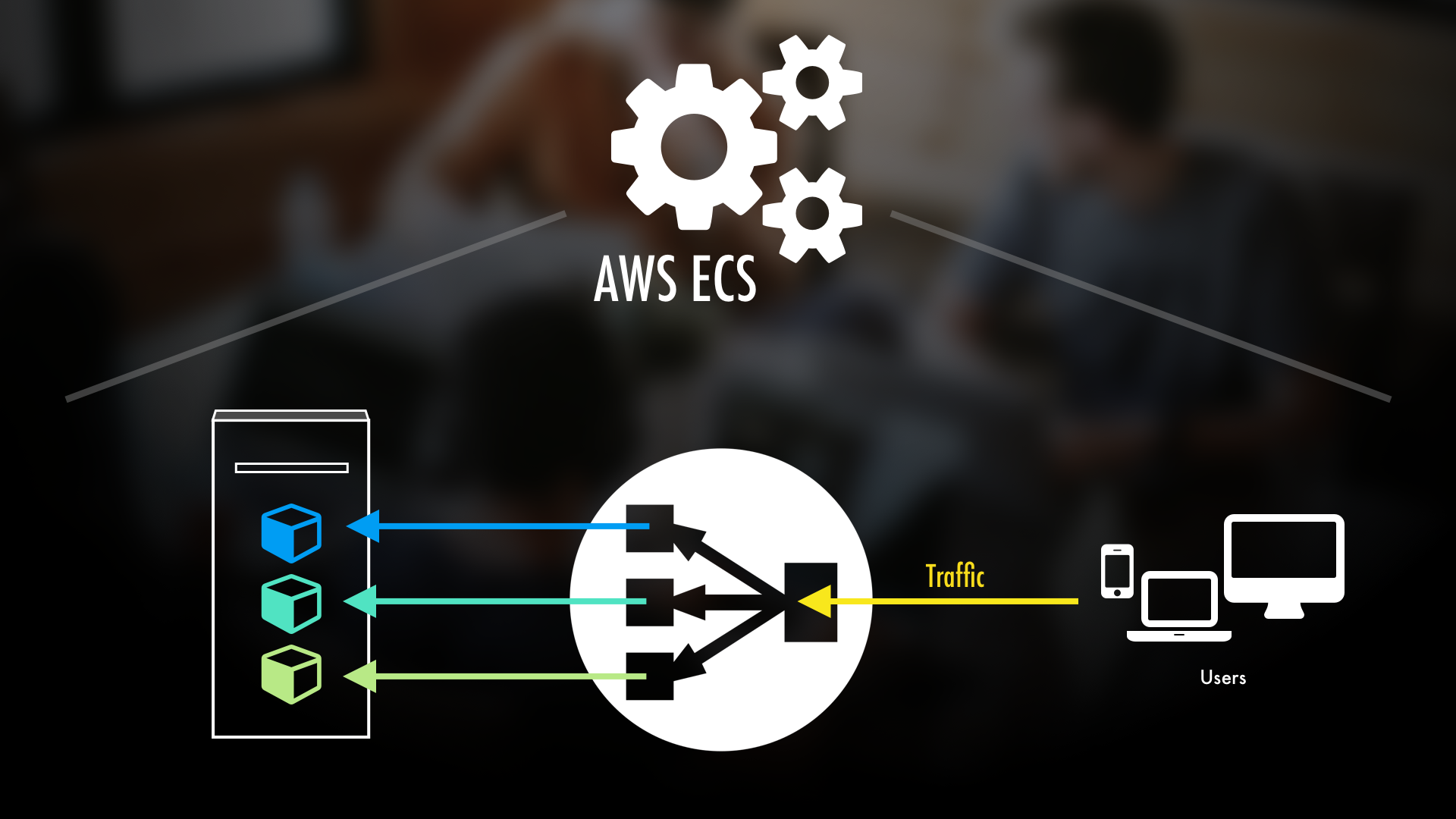

AWS Elastic Container Service, aka ECS, allows us to do a simple workflow:

- Make the Docker Image.

- Give that image to AWS ECS.

- Make the EC2 Instances and tell ECS about them (resulting in an ECS cluster).

- Make an Application Load Balancer and tell ECS about it (so ECS will then manage routing traffic to our individual containers).

- Done.

And that's it. With that done, ECS will take our Docker image, create containers from it, and put them onto our EC2 instances. Using an Application Load Balancer (which is just a type of load balancer available to us in AWS), it will also handle spreading traffic across our various containers. Plus, when using an Application Load Balancer, we can even do things like route traffic based on the URL. The beauty of it all is that we can have our containers all on the same EC2 instances which means we're making the most of the resources that we have.
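If the "route by URL, then spread across containers" part feels abstract, here's a rough Python sketch of the idea. The addresses, ports, and the `TARGET_GROUPS` table are all hypothetical, and the logic is deliberately simplified: longest-prefix matching plus round-robin stands in for the load balancer's real rule evaluation and balancing.

```python
from itertools import cycle

# Hypothetical setup: each path prefix points at the containers ("host:port")
# for one application. Both applications share the same two EC2 instances.
TARGET_GROUPS = {
    "/admin": ["10.0.1.10:32768", "10.0.2.10:32768"],
    "/": ["10.0.1.10:32769", "10.0.2.10:32769", "10.0.2.10:32770"],
}

# One round-robin rotation per application.
_rotations = {prefix: cycle(targets) for prefix, targets in TARGET_GROUPS.items()}


def route(path: str) -> str:
    """Pick the longest matching path prefix, then the next container in its rotation."""
    matches = [prefix for prefix in TARGET_GROUPS if path.startswith(prefix)]
    best = max(matches, key=len)  # assumes every path starts with "/"
    return next(_rotations[best])
```

Calling `route("/admin/users")` hands the request to an admin container, while `route("/cart")` goes to one of the user-facing containers, even though they all live on the same instances.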

Furthermore, it gives us all sorts of monitoring and logging capabilities. We'll not only be able to see the status of all of our containers, but also metrics about the servers running the containers. We can set up rules to manage automatically scaling our application. We can set up alarms to go off if our server resources start getting low.
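To give a feel for what a scaling rule and an alarm boil down to, here's a small sketch. The function names, the 60% CPU target, the 2 to 10 container range, and the 256 MB threshold are all assumptions picked for the example. This is the general shape of a "keep a metric near a target" rule, not the exact math AWS uses.

```python
import math


def desired_count(current: int, cpu_percent: float, target_percent: float = 60.0,
                  minimum: int = 2, maximum: int = 10) -> int:
    """Scale the container count in proportion to how far CPU is from the target."""
    wanted = math.ceil(current * cpu_percent / target_percent)
    # Never go below the floor or above the ceiling we configured.
    return max(minimum, min(maximum, wanted))


def low_memory_alarm(free_memory_mb: int, threshold_mb: int = 256) -> bool:
    """Fire when a server's free memory drops below the threshold."""
    return free_memory_mb < threshold_mb
```

With 4 containers running at 90% CPU, `desired_count(4, 90.0)` asks for 6. At 30% CPU it scales back down to the minimum of 2.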

And what about if we want to update the containers? Simple: just give ECS the new Docker image and it'll take care of redeploying the new containers for us...WITHOUT interrupting traffic.
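How can a redeploy avoid interrupting traffic? The core trick is to start the new containers before stopping the old ones. Here's a toy simulation of that idea in Python. The `rolling_update` function and the image names are hypothetical, and a real deployment also waits on health checks and drains in-flight connections, which this sketch skips.

```python
def rolling_update(running: list, new_image: str, batch: int = 1) -> list:
    """Replace containers a batch at a time; return the container counts seen along the way."""
    history = []
    old = [c for c in running if c != new_image]
    while old:
        step = old[:batch]
        # Start the replacements first, so capacity never dips below the original.
        running.extend([new_image] * len(step))
        history.append(len(running))
        # Only then stop the old containers they replace.
        for container in step:
            running.remove(container)
        old = old[batch:]
        history.append(len(running))
    return history


running = ["app:v1", "app:v1", "app:v1"]
history = rolling_update(running, "app:v2")
```

The count goes 4, 3, 4, 3, 4, 3 and never drops below the original 3, so there is always a full set of containers available to take requests while the swap happens.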

And so, because of that, our next step in making our architecture would be to convert our cloud architecture to use Docker and AWS ECS. We would do this by:

- Turning the original application into a Docker image.

- Turning the new application into a Docker image.

- Making sure Docker and ECS are available on our EC2 instances.

- And, deploying the containers using AWS ECS.

With that done, most of our interactions, in terms of updating our application, will be done through ECS now. Since all the EC2 instances really need is Docker and the things required to work with ECS, we can mostly leave them alone. And the best part is that if we want to deploy MORE applications and services later, we can just follow this pattern again.
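As a rough picture of what "hand it to ECS" involves, here's a Python sketch that builds the two descriptions each application needs: one for the container itself and one for how many copies to run behind the load balancer. The field names are modeled on the ECS API's task definition and service request shapes as I understand them, so double check them against the ECS docs before relying on them. The app names, image names, ports, cluster name, and ARN placeholders are all made up.

```python
def task_definition(name: str, image: str, port: int, memory_mb: int = 256) -> dict:
    """Describe ONE container: which image to run and what it needs."""
    return {
        "family": name,
        "containerDefinitions": [{
            "name": name,
            "image": image,
            "memory": memory_mb,
            "essential": True,
            # Assumption: host port 0 requests a dynamically assigned port,
            # which lets several copies share one EC2 instance.
            "portMappings": [{"containerPort": port, "hostPort": 0}],
        }],
    }


def service(name: str, cluster: str, target_group_arn: str, port: int, count: int = 2) -> dict:
    """Describe how many copies to run and which load balancer target group feeds them."""
    return {
        "cluster": cluster,
        "serviceName": name,
        "taskDefinition": name,
        "desiredCount": count,
        "loadBalancers": [{
            "targetGroupArn": target_group_arn,
            "containerName": name,
            "containerPort": port,
        }],
    }


# Hypothetical apps: (name, Docker image, container port, target group placeholder).
apps = [
    ("user-app", "example/user-app:1.0", 3000, "<user-app-target-group-arn>"),
    ("admin-app", "example/admin-app:1.0", 4000, "<admin-app-target-group-arn>"),
]

requests = {
    name: (task_definition(name, image, port), service(name, "main-cluster", arn, port))
    for name, image, port, arn in apps
}
```

Adding a third application later means adding one more tuple to `apps`. Nothing about the EC2 instances has to change.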

What about other container orchestration solutions?

Yes, there are indeed a great number of other ways you can implement this. Things like Kubernetes, EKS, Nomad, Marathon, Titus, and the list goes on. The thing to recognize is whether or not you want a MANAGED container orchestration service, like ECS, or if you want to build out the whole thing yourself, as with Kubernetes.

Managed Container Orchestrators

A MANAGED container orchestration solution is going to set up all of the nuts and bolts for you. It's going to abstract away deployments, service discovery, managing servers in the cluster, logging, monitoring, security, and either more, or less, depending upon which solution you pick. The reason I tend to swing towards ECS is because...

a) It's a first class citizen in AWS. So it plugs in with everything else in AWS easily.

b) There's no extra cost. (EKS is $0.10/hr per cluster).
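For a sense of what that hourly figure adds up to, here's the back-of-the-napkin math in Python. It uses the $0.10/hr number quoted above and assumes a 30-day month. The function name is just for illustration, and this covers only the per-cluster fee, not the servers themselves.

```python
EKS_HOURLY_PER_CLUSTER = 0.10  # USD, the figure quoted above
HOURS_PER_MONTH = 24 * 30      # assumption: a 30-day month


def monthly_cluster_fee(clusters: int, hourly: float = EKS_HOURLY_PER_CLUSTER) -> float:
    """Per-cluster fee only; the underlying EC2 instances cost the same either way."""
    return round(clusters * hourly * HOURS_PER_MONTH, 2)
```

So `monthly_cluster_fee(1)` comes to 72.0 dollars a month, and three clusters (say dev, staging, and production) come to 216.0.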

Plus, if I'm going to use something like Kubernetes, I'm probably going to do it for the added control that you lose when going with managed solutions. Just to clarify though: unless you're a massive team, you probably don't need that extra control.

Manual Container Orchestrators

Ah Kubernetes. Do you need it or do you not? Find the closest tech worker you can and, with fervor, announce one or the other. "You do NOT need Kubernetes." or "You ABSOLUTELY need Kubernetes!" Tell me how that firefight turns out.

So what DOES Kubernetes do? Well, everything we've talked about, and more.

"Oh my...well then I have to use it..."

Wait, wait, wait. There's a catch. You've got to set almost all of it up manually. There's also the entire management factor of it that comes into play. Understand that this astonishing piece of technology is the successor to Google's internal cluster manager "Borg." And as you can probably infer, if it was meant to run something at the scale of Google...then managing it is no small thing.

That said, it is an awesome piece of technology. It pretty much comes with everything you'd need to manage a cluster-based architecture. Not only can it do everything we've mentioned, but it also offers neat features like namespacing and role-based access control (RBAC), and it lets you extend its functionality beyond the basics. It also does all of this IN the cluster, instead of having to work with things outside of it (e.g. AWS IAM for RBAC).

But whether or not you should use it isn't just a question of "can it do what I want?" Yes, having an entire movie theater as your entertainment system is a neat idea...but can you handle all of the maintenance? And geez, what if you want to change the channel? Now you've got to walk out of the current theater you're in, change it, and come back. That or create a custom remote controller to do it for you (get it K8'ers?).

Conclusion

Now a disclaimer: there is MUCH more to ECS, and container orchestration, than what we've covered here. But again, if you'd like to learn more, you can check out my free series on the whole big topic at The Hitchhiker's Video Guide to AWS ECS and Docker.

Interestingly enough, this more-or-less wraps up our 50,000 ft view of CORE pieces of cloud architecture on AWS. What we've talked about thus far is the MEAT. The tasty meat on the sandwich that makes it so.

What we covered above handles both scaling and adding new services in the general way: if we need more scale, add more EC2 instances, and ECS will worry about putting more containers on the new machines. If we need more services and/or applications? Just make new Docker images and hand them to ECS.

However...what REALLY makes a sandwich a world-wide phenomenon? Just meat and bread? Nope. It's all of the other things around it.

And so, for the rest of this series, we're really going to be diving into how to best optimize our architecture. We will discuss some big questions like: How can we cut down on requests to containers? How can we make them lighter so that we can fit more of them on an instance? And, of course, how can we make the user experience the fastest and best that it can be? We'll tackle a great way to do that in the next series post on Storage and Content Delivery Networks.

Other Posts in This Series

If you're enjoying this series and finding it useful, be sure to check out the rest of the blog posts in it! The links below will take you to the other posts in the Understanding Modern Cloud Architecture series here on Tech Guides and Thoughts so you can continue building your infrastructure along with me.

- Understanding Modern Cloud Architecture on AWS: A Concepts Series

- Understanding Modern Cloud Architecture on AWS: Server Fleets and Databases

- Understanding Modern Cloud Architecture on AWS: Docker and Containers

- Interlude - From Plain Machines to Container Orchestration: A Complete Explanation

- Understanding Modern Cloud Architecture on AWS: Container Orchestration with ECS

- Understanding Modern Cloud Architecture on AWS: Storage and Content Delivery Networks

Enjoy Posts Like These? Sign up to my mailing list!

J Cole Morrison

http://start.jcolemorrison.com

Developer Advocate @HashiCorp, DevOps Enthusiast, Startup Lover, Teaching at awsdevops.io